Overview

What Is AI Inference?

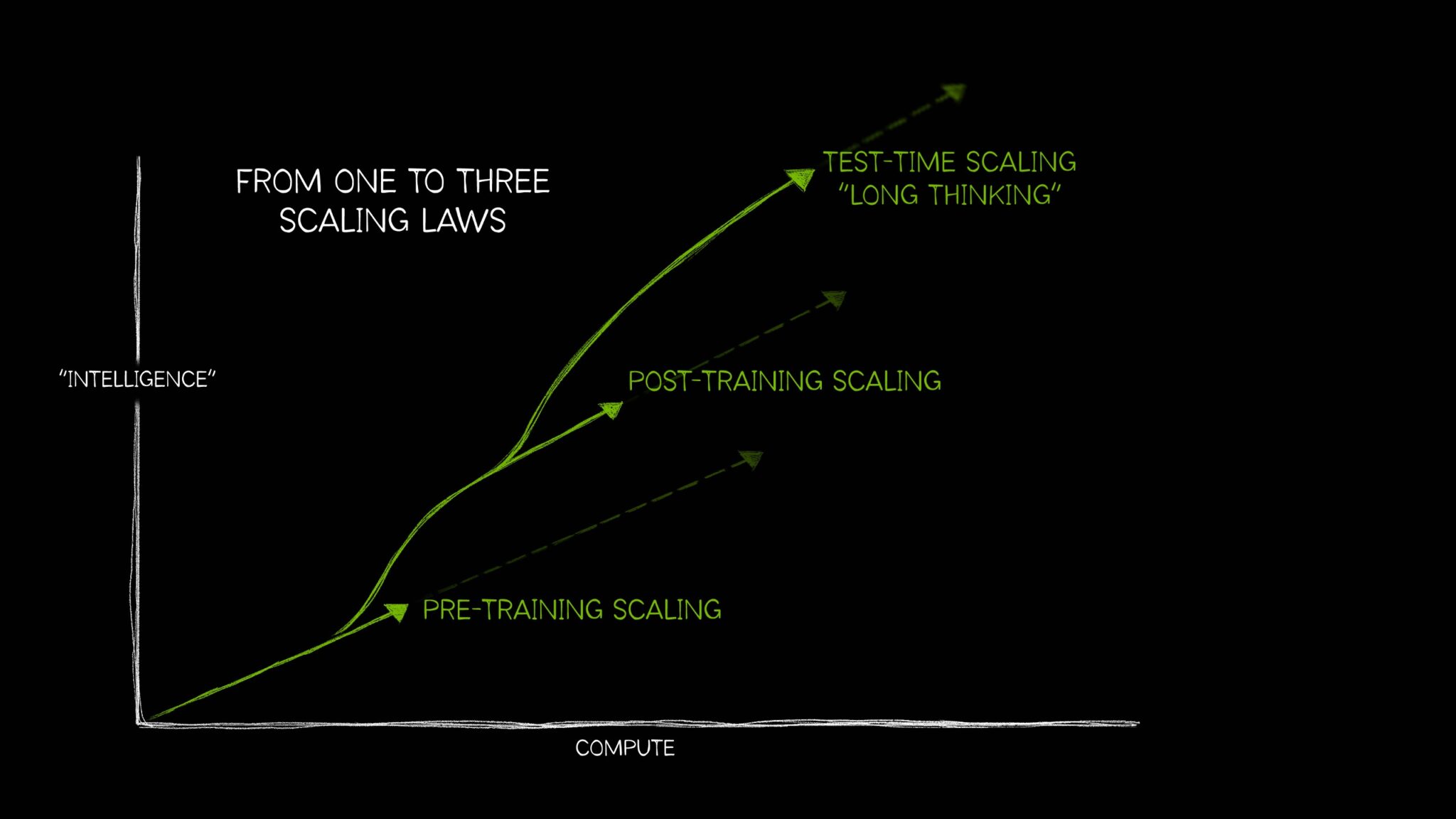

AI inference is the stage where pretrained AI models are deployed to generate new data, and it is where AI delivers results, powering innovation across every industry. AI models are rapidly expanding in size, complexity, and diversity, pushing the boundaries of what’s possible. To use AI inference successfully, organizations need a full-stack approach that supports the end-to-end AI life cycle, along with tools that enable teams to meet their goals.

Benefits

Explore the Benefits of NVIDIA AI for Accelerated Inference

Standardize Deployment

Standardize model deployment across applications, AI frameworks, model architectures, and platforms.

Integrate and Scale With Ease

Integrate easily with tools and platforms on public clouds, on-premises data centers, and at the edge.

Lower Cost

Achieve high throughput and utilization from AI infrastructure, thereby lowering costs.

High Performance

Experience industry-leading performance with the platform that has consistently set multiple records in MLPerf, the leading industry benchmark for AI.
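To make the link between throughput and cost under "Lower Cost" concrete, here is a minimal sketch of the arithmetic. The function name, the hourly price, and the request rates are hypothetical values chosen for illustration only; they are not NVIDIA pricing or benchmark results.

```python
def cost_per_million_requests(hourly_cost_usd: float, requests_per_second: float) -> float:
    """Infrastructure cost of serving one million requests at a steady rate."""
    requests_per_hour = requests_per_second * 3600
    return hourly_cost_usd / requests_per_hour * 1_000_000


# Hypothetical server price; only the ratio between the two results matters.
HOURLY_COST_USD = 4.0

baseline = cost_per_million_requests(HOURLY_COST_USD, requests_per_second=500)
optimized = cost_per_million_requests(HOURLY_COST_USD, requests_per_second=1000)

print(f"baseline:  ${baseline:.2f} per million requests")
print(f"optimized: ${optimized:.2f} per million requests")
```

Under these assumptions, doubling sustained throughput on the same hardware halves the cost per request, which is the sense in which higher utilization lowers cost.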

Software

Explore Our AI Inference Software

NVIDIA AI Enterprise consists of NVIDIA NIM™, NVIDIA Triton™ Inference Server, NVIDIA® TensorRT™, and other tools to simplify building, sharing, and deploying AI applications. With enterprise-grade support, stability, manageability, and security, enterprises can accelerate time to value while eliminating unplanned downtime.

Hardware

Explore Our AI Inference Infrastructure

Get unmatched AI performance with NVIDIA AI inference software optimized for NVIDIA-accelerated infrastructure. The NVIDIA H200, L40S, and NVIDIA RTX™ technologies deliver exceptional speed and efficiency for AI inference workloads across data centers, clouds, and workstations.

Introducing NVIDIA Project DIGITS

NVIDIA Project DIGITS brings the power of Grace Blackwell to developer desktops. The GB10 Superchip, combined with 128 GB of unified system memory, lets AI researchers, data scientists, and students work locally with AI models of up to 200 billion parameters.
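As a rough sanity check on how a 200-billion-parameter model relates to 128 GB of memory, the sketch below estimates the memory needed for model weights alone at several precisions. The precisions listed are assumptions for illustration; the text above does not state which one applies, and the estimate ignores activations, KV cache, and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS = 200e9    # 200 billion parameters, from the text above
MEMORY_GB = 128   # unified system memory, from the text above

for bits in (16, 8, 4):  # assumed weight precisions, for illustration only
    need = weight_memory_gb(PARAMS, bits)
    verdict = "fits" if need <= MEMORY_GB else "does not fit"
    print(f"{bits}-bit weights: {need:.0f} GB -> {verdict} in {MEMORY_GB} GB")
```

By this estimate, only the 4-bit case (about 100 GB of weights) stays under 128 GB, which suggests the 200-billion-parameter figure presumes low-precision weights.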

Use Cases

How AI Inference Is Being Used

See how NVIDIA AI supports industry use cases, and jump-start your AI development with curated examples.

- Digital Humans
- Content Generation
- Biomolecular Generation
- Fraud Detection
- AI Chatbot
- Security Vulnerability Analysis

Digital Humans

NVIDIA ACE is a suite of technologies that help developers bring digital humans to life. Several ACE microservices are NVIDIA NIMs—easy-to-deploy, high-performance microservices, optimized to run on NVIDIA RTX AI PCs or NVIDIA Graphics Delivery Network (GDN), a global network of GPUs that delivers low-latency digital human processing to 100 countries.

Customer Stories

How Industry Leaders Are Driving Innovation With AI Inference

Resources

The Latest in AI Inference Resources

- Blogs
- Sessions
- Training
- Videos

Next Steps

Ready to Get Started?

Explore everything you need to start developing your AI application, including the latest documentation, tutorials, technical blogs, and more.

Get in Touch

Talk to an NVIDIA product specialist about moving from pilot to production with the security, API stability, and support of NVIDIA AI Enterprise.

Get the Latest on NVIDIA AI

Sign up for the latest news, updates, and more from NVIDIA.